|

You could change this piece of code if you wanted to transform the data to a different datatype at the time of entry. With my files I am making everything into a varchar or nvarchar and the computation to the correct datatypes happens at another point after the data has been transformed in. The DataTypeDescription can be a varchar or an nvarchar. These fields can be null or 0 for fields that are delimited. There is a ParseStartPoint and ParseEndPoint for files that are fixed length. The first field in the table is the primary key, the second two match it up to the import file (not zip) it associated with, and the FieldName corresponds to the name of the field that will be coming through. You will most likely populate this with data dictionary information from your data provider. This ETL_ImportDictionary is the data dictionary to your file. In this example there is only one file per zip: ETLImportId The delimiter is what is delimiting the file and the DestFieldToIndex indicates what field to index on the DestTableName (final output). If it is not, it is assumed to be a fixed length file. The delimited indicator indicates whether this is a delimited file or not. Data is moved to the final table after parsing or delimiting and holds the data in a format for business processing. Once the data has been imported in raw format (not parsed or delimited) it is then moved to the DestTableName, which is the name of the final table for the data. The LoadTableName is the name of the table where the data from the file will be imported to. The ImportFilename is the actual name of the file within the zip file. The following ETL_ImportFiles table now describes the files within each of the zip files in the ETL_ZipFiles table. The FTP location is where to retrieve the file from. The active indicator is helpful in testing when you are only testing one file as it allows you to turn off files you don't need to test. The Active indicator indicates what files to run. The Run Order is the order in which the files will be run. The description and server location are self explanatory. There is a file prefix since each file starts with a prefix and then has a date appended to it. I give each file a filecode for lookup references in code (instead of hard coding a number into my sprocs). The ETL_ZipFiles table contains all the zip file information received from our data provider. Depending on the data that we need, we may be extracting one file from a zip or many.įirst, let's start our design with the tables we will need for our package parameters. Of the files we do need to download, some consist of fixed length data, while others include delimited data that needs to be parsed.

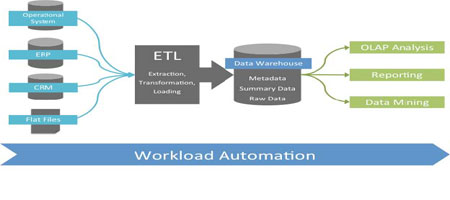

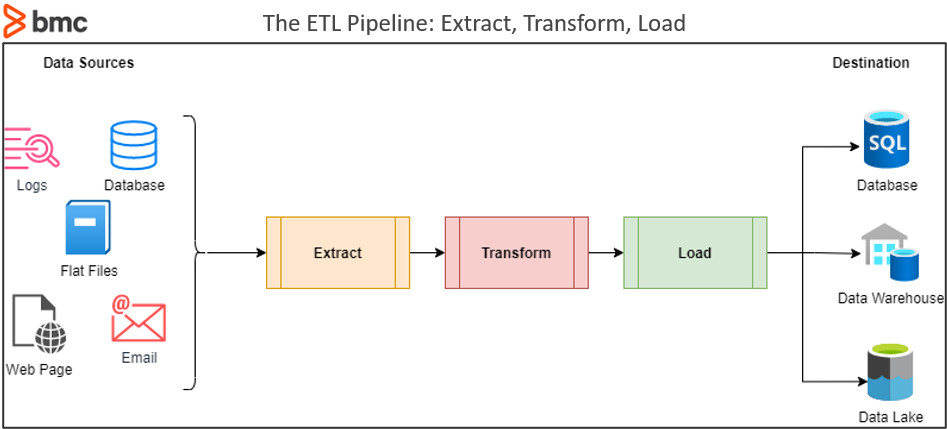

These include copyright information, data dictionaries (which do not change) and file processed date information. Because the provider errs on the side of providing more information than less, it gives us many files which tend to be redundant and are not necessary for us to load. These files are provided in zip format and contain multiple flat files within the zip. The data provider has provided us with documentation of the data that it is providing to us so we know what format we are receiving. Weekly at my organization we download files from a data provider. I decided to make the entire process configurable and to use SSIS for what I really needed it for which was the extracting, unzipping and importing of the data. New files would be provided by the data provider and that meant time to change the package and test it against all file downloads we were receiving. The package became difficult to test because it needed to be modified for each test. We had a traditional file load set up using SSIS where all of the configuration data was stored in the package. My initial idea for designing this download and transform process was instigated by new files that were being offered from our data provider and a slow testing process associated with testing the package.

All business logic is processed in the database while SSIS performs basic extraction functionality. By storing all criteria in the database, the SSIS package no longer has to be modified for new types of files, new imports or to test a subset (say one file out of five) of a load. The data definition and all criteria for what needs to be loaded, extracted, and how it will be stored is saved and retrieved from the database.

In my design, the SSIS package downloads the flat file from an ftp site, unzips it, extracts it and then loads the data into the database. All load criteria for what to load, where to store the data, and transformation of the data happen in the database. This article is about how to dynamically load flat files using SSIS as a shell for basic extraction functionality while all transformations and business logic run in the database.

0 Comments

Leave a Reply. |

Details

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed